Message queues are a software architecture pattern that solves many of the problems distributed systems face. When I first started my career, I had to learn what a message broker (RabbitMQ, specifically) was and how I’d use it in the system I was building.

» If you’re interviewing for a software role, you’ll want to know what message queues are - they may come up in your system design interview!

What are messages?

If you’ve ever looked up an explanation of message queuing, you’ve probably seen the mail system analogy:

You write a letter to your friend - you are the “publisher” or “producer”.

The post office is responsible for delivering it - it plays the role of the “message queue”.

Your friend receives the letter - your friend is the “consumer”.

Think of a message as the letter you’re sending to your friend - it contains something your friend can use, whether that’s a nice little note or a gift card.

In software, these messages are often represented as dictionaries:

{
    "task": "send_email",
    "to": "[email protected]"
}

What are message queues?

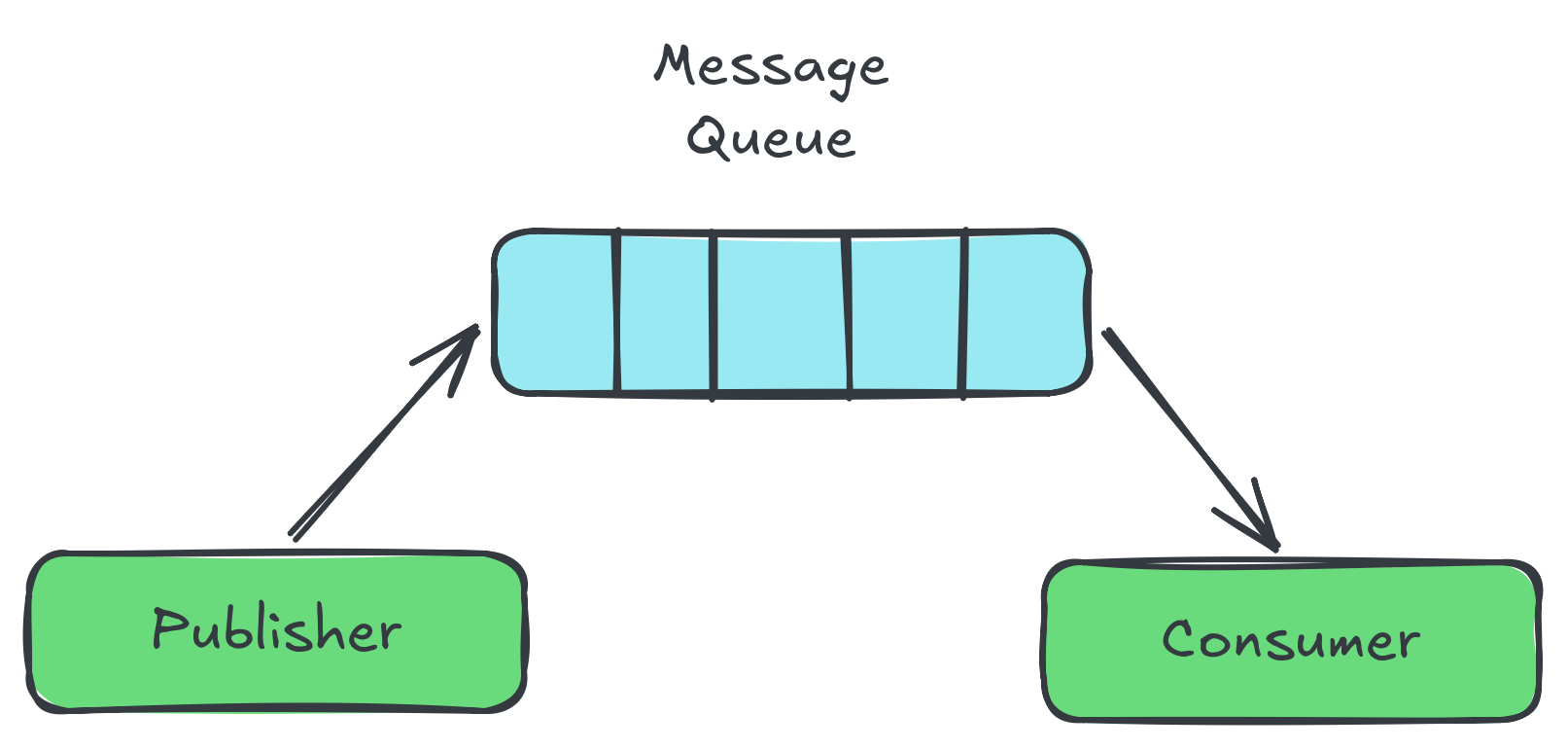

Message queues are like a “holding area” for your messages - publishers send messages to the queue, where they wait until a consumer reads them.
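To make the publisher, queue, and consumer roles concrete, here is a minimal sketch that uses Python’s built-in `queue.Queue` as an in-process stand-in for a real broker such as RabbitMQ. The function names `publish` and `consume`, the `SENTINEL` stop signal, and the `example.com` addresses are placeholders chosen for illustration, not part of any broker’s API.

```python
import json
import queue
import threading

# In-process stand-in for a broker-managed queue (assumption: a real
# system would talk to a broker such as RabbitMQ over the network).
message_queue = queue.Queue()
SENTINEL = None  # hypothetical stop signal for the consumer


def publish(message: dict) -> None:
    """Publisher: serialize the message and drop it in the holding area."""
    message_queue.put(json.dumps(message))


def consume(handled: list) -> None:
    """Consumer: read messages in arrival order until told to stop."""
    while True:
        raw = message_queue.get()  # blocks until a message is available
        if raw is SENTINEL:
            break
        handled.append(json.loads(raw))


handled = []
consumer = threading.Thread(target=consume, args=(handled,))
consumer.start()

# Placeholder addresses, for illustration only.
publish({"task": "send_email", "to": "friend@example.com"})
publish({"task": "send_email", "to": "colleague@example.com"})

message_queue.put(SENTINEL)
consumer.join()

for message in handled:
    print(f"Handled {message['task']} for {message['to']}")
```

The publisher never calls the consumer directly: it only knows about the queue, which is what lets the two sides run at their own pace.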

» Related reading: what is a queue
